Measuring light

From the moment plates began to be mass-produced, it became essential to quantify their sensitivity. To make the operator’s life easier, various light-measuring systems were developed. Often impractical and frequently experimental, they were eventually superseded, to great effect, by the photo-electric light meter.

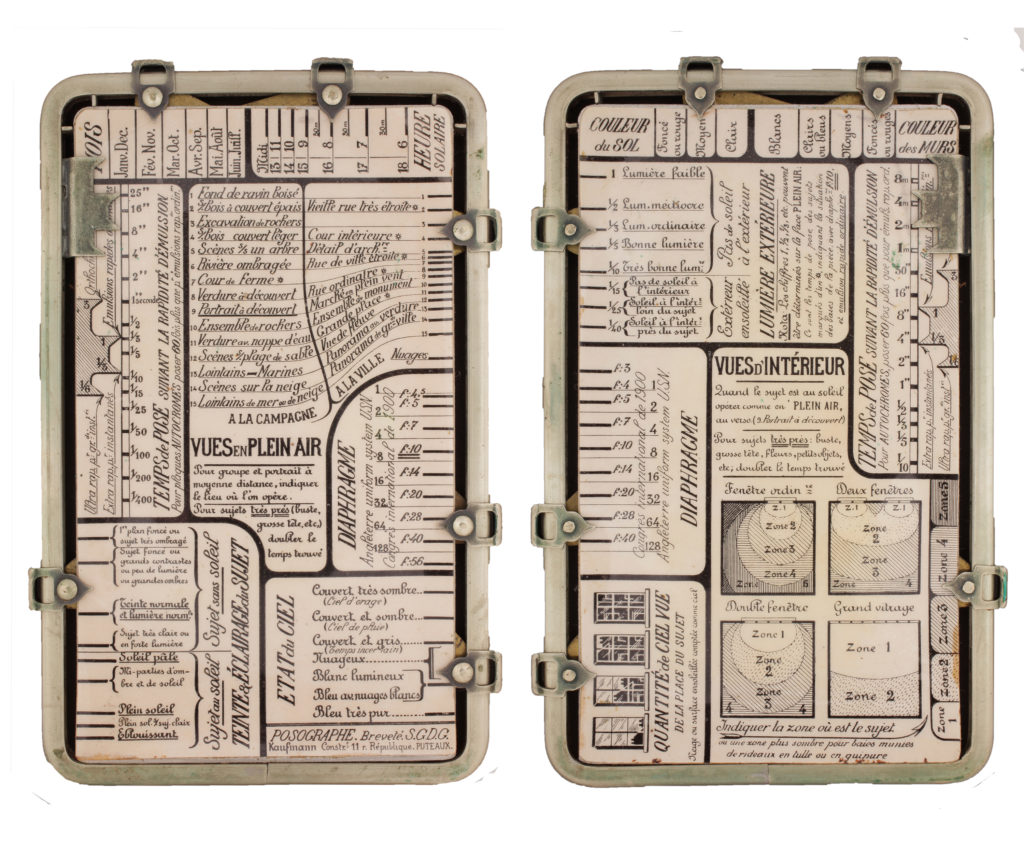

Exposure tables or calculators

Around 1880, Ferdinand Hurter, born in Schaffhausen (Switzerland), and the Englishman Vero Charles Driffield developed a sensitometric procedure together with a sensitivity index, an approach that has remained in use to this day. Various types of tables made it possible to determine the correct exposure time from parameters such as the type of subject, lighting conditions, latitude, season and time of day.

Actinometers

These devices worked by measuring the time a piece of photosensitive paper took to darken to a specified density. It was the work of Alfred Watkins, around 1890, that first allowed this system to prove its worth.

Extinction meters

A graduated filter or optical wedge, fitted to the camera and aimed at the subject, was adjusted until the brightest point of the subject just ceased to be visible. The resulting luminosity index was then converted into an exposure time using a scale. The method nevertheless remained imprecise.

Photo-electric light meters

In February 1873, Willoughby Smith addressed the Society of Telegraph Engineers in Britain, describing how the electrical conductivity of selenium varies with the amount of light it receives. This discovery prompted a range of research, much of it unsuccessful, because the electrical technology of the period was still in its infancy. Serious results would not appear until the 1930s.